Here's an example:

CUDA OFF:

CUDA ON:

Encoding settings are identical in both cases:

NFS (and most other car-sim games) are technically scroller-type games. Most of the image you see changes linearly and has low dynamics. That is why, in terms of complexity, it can be compared to desktop recording. And this type of task is OK for CUDA encoding.

Carpenter01 wrote: I don't have this problem; even on YouTube it has almost perfect quality. http://www.youtube.com/watch?v=ZZmHM8fFxPU

I can barely notice the difference between the raw video, and the rendered MP4.

GPU video encoding is much faster than CPU encoding and has the ability to provide higher efficacy. But it's not easy developing software to run on parallel processors.

vivan wrote: That's because GPU video encoding is just marketing. GPU encoders can't use all the advanced algorithms that a software encoder can.

So they are fast, but the quality is like MPEG-2.

Intel Quick Sync is a bit better (in speed and quality), but software encoders still blow it away.

For instance, x264 has a preset with equal speed and quality. Guess what? It's called "ultrafast".
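If anyone wants to try that comparison themselves, here is a minimal Python sketch that only builds an ffmpeg command line for x264's ultrafast preset. It assumes an ffmpeg build with libx264 is installed. The function name, the file names and the CRF value are placeholders for illustration, not settings anyone in this thread used.

```python
import shlex

def x264_command(src, dst, preset="ultrafast", crf=23):
    """Build an ffmpeg argument list for a software (x264) encode."""
    return [
        "ffmpeg",
        "-i", src,          # input recording (placeholder name)
        "-c:v", "libx264",  # software H.264 encoder
        "-preset", preset,  # speed vs. compression trade-off
        "-crf", str(crf),   # constant-quality target (assumed value)
        "-c:a", "copy",     # leave the audio stream untouched
        dst,
    ]

if __name__ == "__main__":
    # Print the command instead of running it, so nothing is encoded by accident.
    print(shlex.join(x264_command("raw_capture.avi", "out_ultrafast.mp4")))
```

Encoding the same source once this way and once with the GPU encoder at a similar file size would give two files that can be compared frame by frame.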

Note that this isn't my only video. I have over 150 videos (some unlisted) and I haven't seen this kind of degradation in any of them. I've used Fraps, Afterburner, Asus Gamer OSD, and now Action. My Youtube Channel

apk wrote: NFS (and most other car-sim games) are technically scroller-type games. Most of the image you see changes linearly and has low dynamics. That is why, in terms of complexity, it can be compared to desktop recording. And this type of task is OK for CUDA encoding.

Carpenter01 wrote: I don't have this problem; even on YouTube it has almost perfect quality. http://www.youtube.com/watch?v=ZZmHM8fFxPU

I can barely notice the difference between the raw video, and the rendered MP4.

But try to encode some (MMO)RPG-type games, where the image content changes intensively and unpredictably (walking and turning the camera at the same time), and you will see that the CUDA encoder can't handle it the way a software encoder does.

Check these example files of MMORPG gameplay encoded with and without CUDA acceleration:

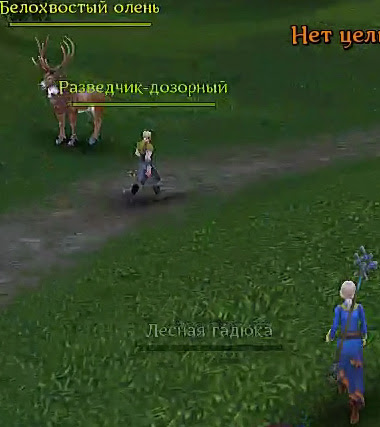

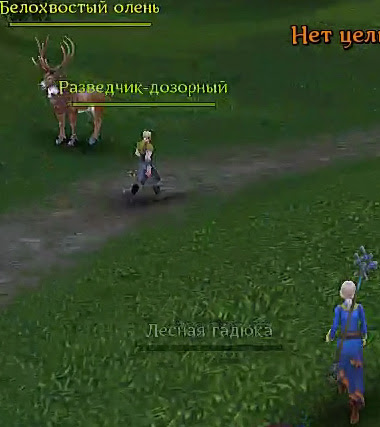

CUDA OFF

CUDA ON

(each file is ~9 MB)

Higher resolution makes the difference even more noticeable.
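To make the "scroller vs. free camera" point a bit more concrete, here is a rough Python sketch. It is a toy stand-in for motion estimation, not what Action! or any CUDA encoder actually runs. Frames are tiny grayscale grids stored as nested lists, and the names `scroll_right`, `residual` and `best_shift_residual` are made up for illustration. The idea: try a handful of global horizontal shifts and see how much of the new frame is still unexplained. A pure scroll leaves nothing behind, while an unrelated frame leaves a lot that has to be spent on bits.

```python
import random

def scroll_right(frame, dx, fill=0):
    """Return a copy of frame with every row moved dx >= 0 pixels to the right."""
    return [[row[x - dx] if x >= dx else fill for x in range(len(row))]
            for row in frame]

def residual(prev, curr, dx):
    """Mean absolute error between curr and prev shifted by dx, over the overlap only."""
    width = len(prev[0])
    total = count = 0
    for row_p, row_c in zip(prev, curr):
        for x in range(max(0, dx), min(width, width + dx)):
            total += abs(row_c[x] - row_p[x - dx])
            count += 1
    return total / count

def best_shift_residual(prev, curr, max_shift=4):
    """Smallest residual over all horizontal shifts in [-max_shift, max_shift]."""
    return min(residual(prev, curr, dx) for dx in range(-max_shift, max_shift + 1))

if __name__ == "__main__":
    rng = random.Random(0)
    base = [[rng.randrange(256) for _ in range(32)] for _ in range(16)]
    scrolled = scroll_right(base, 2)  # "racing game": the scene slides sideways
    unrelated = [[rng.randrange(256) for _ in range(32)] for _ in range(16)]
    print("scrolling scene:", best_shift_residual(base, scrolled))
    print("unrelated frame:", best_shift_residual(base, unrelated))
```

Real footage sits somewhere between these two extremes, and real encoders search per block rather than per frame, so treat this only as an intuition for why predictable motion is cheap to encode.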

Are you kidding?

Carpenter01 wrote: I don't have this problem; even on YouTube it has almost perfect quality. http://www.youtube.com/watch?v=ZZmHM8fFxPU

I can barely notice the difference between the raw video, and the rendered MP4.

Proof?

Nielo TM wrote: GPU video encoding is much faster than CPU encoding

In video encoding, "efficiency" means quality per bitrate. Hardware encoders are the worst here, since software encoders can provide the same quality at much smaller (2x-4x) bitrates.

Nielo TM wrote: and has the ability to provide higher efficacy.
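For anyone who wants to put numbers on "quality per bitrate", here is a small Python sketch. It assumes PSNR as the quality measure, which is my choice for illustration (nobody in the thread names a metric), and frames are plain nested lists of grayscale values. The helper names and the 60-second duration in the example are hypothetical.

```python
import math

def bitrate_kbps(size_bytes, duration_s):
    """Average bitrate of a file in kilobits per second."""
    return size_bytes * 8 / duration_s / 1000

def psnr(ref, test, peak=255):
    """PSNR in dB between two equally sized grayscale frames (nested lists)."""
    squared = [(a - b) ** 2
               for row_r, row_t in zip(ref, test)
               for a, b in zip(row_r, row_t)]
    mse = sum(squared) / len(squared)
    # Identical frames have zero error, so PSNR is unbounded.
    return math.inf if mse == 0 else 10 * math.log10(peak ** 2 / mse)

if __name__ == "__main__":
    # A ~9 MB clip, assuming (hypothetically) that it runs for 60 seconds.
    print(bitrate_kbps(9_000_000, 60))  # 1200.0
```

When two encodes have the same size and length, their bitrates match, so whichever one scores higher against the raw frames is the more efficient encoder in this sense.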

That's not the main problem. The main problem is the GPU's architecture, which is very inefficient for any compression.

Nielo TM wrote: But it's not easy developing software to run on parallel processors.

Because it's from YouTube, which downgrades every video no matter how high the original quality was.

vivan wrote: Are you kidding?

Carpenter01 wrote: I don't have this problem; even on YouTube it has almost perfect quality. http://www.youtube.com/watch?v=ZZmHM8fFxPU

I can barely notice the difference between the raw video, and the rendered MP4.

And that's 1080p downscaled to 1366x768 (I'm too lazy to download the full video to make a full-size screenshot). Everything is blocky and blurry as hell.
